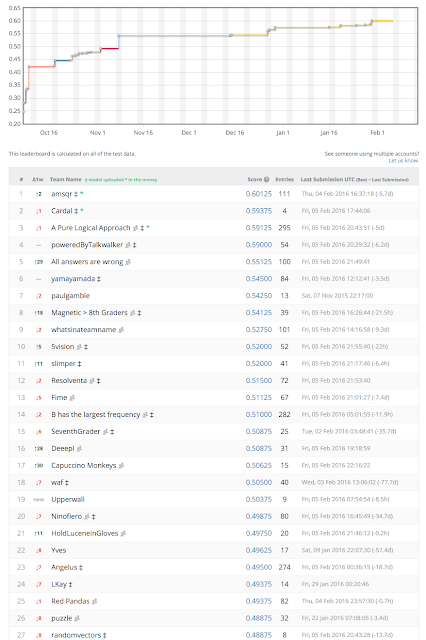

In 2015 I took part in a machine learning competition hosted on Kaggle that aimed to solve a multiple-choice 8th-grade science test. At that time there were no large pretrained models to leverage and, unsurprisingly, the best-performing models were IR-based (information retrieval) systems that would barely achieve a GPA of 1.0 in the US grading system:

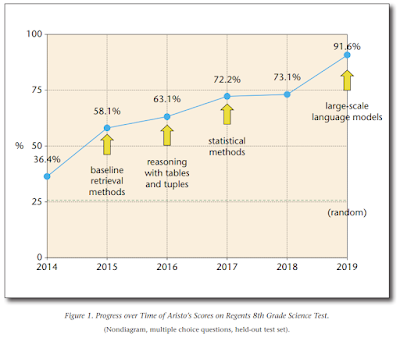
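To give a feel for what "IR-based" means here, the sketch below scores each answer option by how well the question plus that option matches the best single sentence in a background corpus, weighting shared words by inverse document frequency (IDF), and picks the highest-scoring option. This is a minimal, hypothetical illustration assuming a tiny in-memory corpus and plain word overlap (no stemming, stopword removal, or search engine); it is not the code used in the competition or by the Aristo project, and all function names are made up for the example.

```python
import math
import re
from collections import Counter


def tokenize(text):
    # Lowercase and keep alphabetic tokens only (deliberately simplistic).
    return re.findall(r"[a-z]+", text.lower())


def build_idf(corpus):
    # Smoothed inverse document frequency for every term in the toy corpus.
    n = len(corpus)
    df = Counter()
    for doc in corpus:
        df.update(set(tokenize(doc)))
    return {term: math.log((n + 1) / (count + 1)) + 1 for term, count in df.items()}


def score_option(question, option, corpus, idf):
    # Query = question terms + option terms; score = best IDF-weighted
    # overlap with any single corpus sentence.
    query = set(tokenize(question)) | set(tokenize(option))
    best = 0.0
    for doc in corpus:
        overlap = query & set(tokenize(doc))
        best = max(best, sum(idf.get(term, 0.0) for term in overlap))
    return best


def answer(question, options, corpus):
    # Return the index of the highest-scoring option (ties go to the first).
    idf = build_idf(corpus)
    scores = [score_option(question, opt, corpus, idf) for opt in options]
    return max(range(len(options)), key=scores.__getitem__)


corpus = [
    "Plants use sunlight to make food through photosynthesis.",
    "The heart pumps blood through the body.",
    "Water freezes at zero degrees Celsius.",
]
question = "What process do plants use to make food from sunlight?"
options = ["respiration", "photosynthesis", "digestion", "evaporation"]
print(options[answer(question, options, corpus)])
```

The weakness is easy to see: the solver only rewards word overlap, so any question whose answer requires combining facts or reasoning beyond a single matching sentence defeats it, which is consistent with the low scores of that era.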

However, several years later (and many thousands of dollars spent training large Transformers), Allen AI researchers reported in 2020 substantially better results using BERT- or RoBERTa-based QA solvers. This breakthrough shows that a QA system built on publicly available language models and training data can score 90%+ (a GPA of 4.0) on a similar 8th-grade test.

References

Project Aristo: Towards Machines that Capture and Reason with Science Knowledge

From ‘F’ to ‘A’ on the N.Y. Regents Science Exams: An Overview of the Aristo Project

https://orcid.org/0000-0002-6020-3569

